I can’t believe I’ve had an original idea in wanting a face system monitor. The face would change (skin color, eye size, ear flapping, mouth screaming, whatever), and the user could configure it however they wanted. Then I could get a quick system overview just by looking at the face in the corner of my screen. I’d code it up myself, but for two reasons: I think I’d tire of it quickly, as badly as I want to see it, and I don’t even know where to start with coding anything more than some bash scripting.

That’s an interesting idea, but it may suffer from the all-too-familiar programmer-art problem (that is, most programmers aren’t great at art).

In its simplest form, it probably wouldn’t be difficult to code: you could use something like emojis to indicate state. After a quick search, I even found a guide on how to do almost exactly what you’re looking for.
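To give a rough idea of what the emoji version could look like, here is a minimal Python sketch. It is not taken from the guide; the thresholds, the chosen faces, and the names `FACES`, `face_for_load`, and `current_face` are all made up for illustration. It assumes a Unix-like system, since `os.getloadavg()` isn’t available on Windows.

```python
import os

# Hypothetical thresholds (load per CPU core) and faces; tune to taste.
FACES = [
    (0.5, "😀"),  # mostly idle
    (1.0, "😐"),  # busy but keeping up
    (2.0, "😰"),  # more work queued than cores
]
OVERLOADED = "😱"  # anything at or above the last threshold


def face_for_load(load_per_core: float) -> str:
    """Return the first face whose threshold the load stays under."""
    for threshold, face in FACES:
        if load_per_core < threshold:
            return face
    return OVERLOADED


def current_face() -> str:
    """Read the 1-minute load average (Unix-only) and map it to a face."""
    one_minute, _, _ = os.getloadavg()
    cores = os.cpu_count() or 1  # cpu_count() can return None
    return face_for_load(one_minute / cores)


if __name__ == "__main__":
    print(current_face())
```

Since it just prints one character, you could call it from a bash script or a status bar widget that refreshes every few seconds. Swapping the emojis for image file names would be the obvious next step toward a real face.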

> most programmers aren’t great at art

Yeah, it would be nice to have an animator and a programmer work together for stuff like this.

On the other hand, Blender has Python scripting, meaning a lot of Blender users would be capable of that.

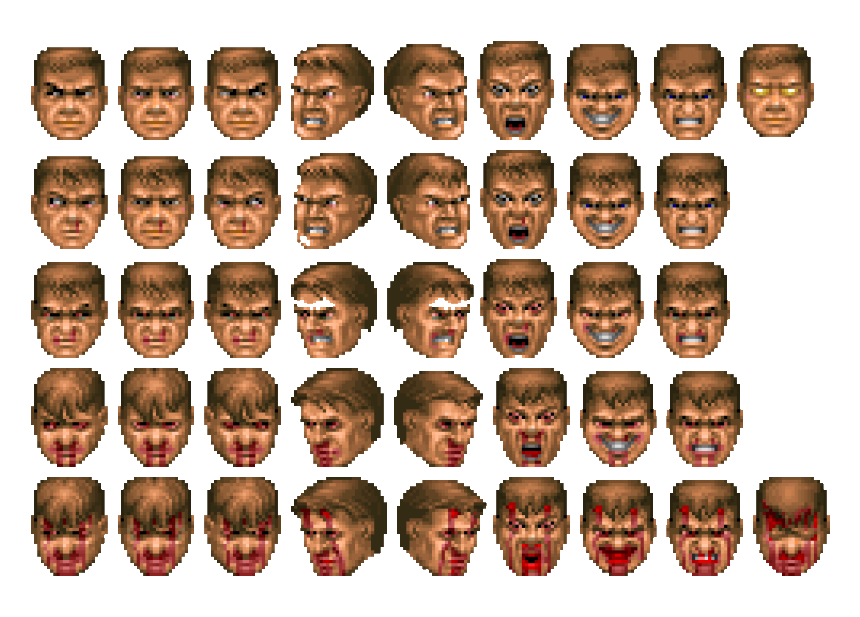

So all that is required for a programmer is to create Python endpoints and then provide a similar interface that would work on pre-rendered graphics.

I think I got the art part settled: https://www.spriters-resource.com/ms_dos/doomdoomii/asset/27876/

If no one else does this, I think I might.

Well darn, that link doesn’t work for me.

If you’re referring to LinuxVox, it no longer works for me either. I’m pretty sure it’s an AI content mill anyway.